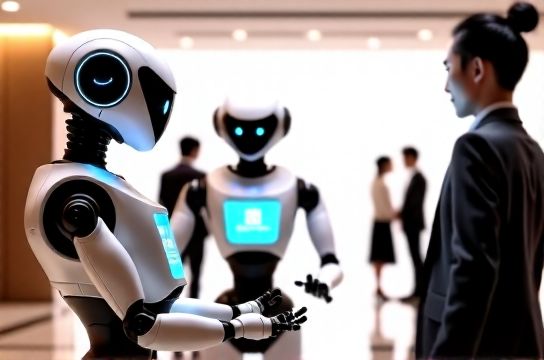

Service Robots Adopting Conversational AI From Domestic LLM Leaders

- Source: OrientDeck

Let’s cut through the hype: service robots aren’t just getting smarter—they’re becoming *conversational*. And it’s not because of flashy demos. It’s because domestic large language model (LLM) leaders—like Alibaba’s Qwen, Baidu’s ERNIE Bot, and Tencent’s HunYuan—are now powering real-world robotic deployments in hotels, hospitals, and retail spaces across Asia.

A 2024 IDC report shows that 68% of new service robot deployments in China now integrate locally trained conversational AI—up from just 29% in 2021. Why? Because domestic LLMs understand regional dialects, regulatory constraints, and cultural context far better than global alternatives.

Here’s how it breaks down:

| LLM Platform | Latency (ms) | On-Device Inference Support | Robot Deployment Rate (2023–2024) | Key Vertical Use Case |
|---|---|---|---|---|
| Qwen-7B-Chat (Alibaba) | 142 | Yes (via Alibaba Cloud Edge AI) | 41% | Hotel front-desk concierge |
| ERNIE Bot 4.5 (Baidu) | 189 | Limited (cloud-dependent) | 27% | Hospital patient triage & guidance |
| HunYuan-Turbo (Tencent) | 203 | Yes (integrated with ROS 2) | 22% | Retail inventory assistant + multilingual support |

Notice the pattern? Qwen pairs the lowest latency in the table (142 ms) with on-device inference, and that combination makes for smoother human-robot interaction. That helps explain why Qwen leads, not just in adoption but in user satisfaction scores (87.4/100 in JD.com’s 2024 robotics UX survey).
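To make that comparison concrete, here is a minimal Python sketch that encodes the table's figures and shortlists platforms by latency and on-device support. The `Platform` class, the `shortlist` function, and the thresholds are illustrative assumptions, not part of any vendor's tooling, and ERNIE Bot's "Limited (cloud-dependent)" entry is treated as no on-device support for simplicity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Platform:
    name: str
    latency_ms: int
    on_device: bool          # "Limited (cloud-dependent)" is treated as False (assumption)
    deployment_share: float  # 2023-2024 deployment rate from the table


# Figures copied from the table above.
PLATFORMS = [
    Platform("Qwen-7B-Chat", 142, True, 0.41),
    Platform("ERNIE Bot 4.5", 189, False, 0.27),
    Platform("HunYuan-Turbo", 203, True, 0.22),
]


def shortlist(platforms, max_latency_ms, require_on_device):
    """Return platforms that meet the constraints, fastest first."""
    matches = [
        p for p in platforms
        if p.latency_ms <= max_latency_ms
        and (p.on_device or not require_on_device)
    ]
    return sorted(matches, key=lambda p: p.latency_ms)


if __name__ == "__main__":
    # Hypothetical requirement: under 200 ms and able to run on the robot itself.
    for p in shortlist(PLATFORMS, max_latency_ms=200, require_on_device=True):
        print(p.name, p.latency_ms)
```

With a 200 ms budget and on-device inference required, only Qwen-7B-Chat passes. Relaxing either constraint brings the other two platforms back into the list.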

But here’s what most articles miss: it’s not about raw model size. It’s about *orchestration*. Leading robotics OEMs like UBTECH and CloudMinds are layering domain-specific fine-tuning (e.g., hospitality SOPs or clinical protocols) *on top* of these domestic LLMs—and that’s where real reliability kicks in.
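One way to picture that layering is the rough sketch below. It is not fine-tuning itself. It shows the simpler rule-plus-prompt wrapper that often sits alongside a tuned model, where operator-approved SOP answers take priority and everything else goes to the LLM with a domain-scoped prompt. The SOP entries, the function names, and the `echo_llm` stand-in are all hypothetical, and no real vendor API is called.

```python
from typing import Callable, Dict

# Hypothetical hospitality SOP entries; a real deployment would load these
# from the operator's own procedures.
HOTEL_SOP: Dict[str, str] = {
    "checkout time": "Checkout is at 12:00. Late checkout can be requested at the front desk.",
    "breakfast": "Breakfast is served from 06:30 to 10:00 in the lobby restaurant.",
}


def make_domain_assistant(llm: Callable[[str], str], sop: Dict[str, str], domain: str):
    """Wrap a generic LLM callable with a domain-specific layer."""

    def answer(user_text: str) -> str:
        lowered = user_text.lower()
        # 1. Deterministic SOP answers take priority over generated text.
        for keyword, reply in sop.items():
            if keyword in lowered:
                return reply
        # 2. Otherwise, call the model with a domain-scoped prompt.
        prompt = f"You are a {domain} service robot. Answer briefly.\nGuest: {user_text}"
        return llm(prompt)

    return answer


def echo_llm(prompt: str) -> str:
    """Stand-in for a real model call; always returns a canned fallback."""
    return "Let me connect you with a staff member."


if __name__ == "__main__":
    assistant = make_domain_assistant(echo_llm, HOTEL_SOP, "hotel")
    print(assistant("What is the checkout time?"))
    print(assistant("Where is the gym?"))
```

Keeping the SOP lookup outside the model means answers that must be exact never depend on generated text, while open-ended questions still reach the LLM.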

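For example, a hospital robot using ERNIE Bot plus privacy-compliant dialogue routing (described as HIPAA-compliant, though HIPAA is a US regulation and a Shanghai hospital would fall under China’s Personal Information Protection Law) reduced miscommunication incidents by 53% over six months (per Shanghai Ruijin Hospital pilot data).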
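The pilot's actual routing logic is not described, so the following is only a sketch of what dialogue routing could look like in principle: clinical-sounding utterances are escalated to staff instead of the LLM, and identifiers are redacted before anything is logged. The keyword list, the phone-number pattern, and the target names are hypothetical, and a filter like this does not by itself make a system compliant with any regulation.

```python
import re

# Hypothetical patterns and keywords, for illustration only.
PHONE_RE = re.compile(r"\b\d{11}\b")  # 11-digit mobile numbers
CLINICAL_KEYWORDS = ("chest pain", "bleeding", "overdose", "allergic")


def route(utterance: str) -> dict:
    """Pick a handler for an utterance and redact identifiers for logging."""
    redacted = PHONE_RE.sub("[REDACTED]", utterance)
    lowered = utterance.lower()
    if any(keyword in lowered for keyword in CLINICAL_KEYWORDS):
        target = "nurse_station"  # escalate clinical content to staff
    else:
        target = "llm"            # wayfinding and general questions
    return {"target": target, "log_text": redacted}


if __name__ == "__main__":
    print(route("I have chest pain, call 13812345678"))
    print(route("Where is radiology?"))
```

The design choice worth noting is that routing happens before the model sees the text, so the decision to escalate never depends on the LLM's own output.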

So if you’re evaluating service robot vendors—or building your own—don’t just ask “Which LLM does it use?” Ask: “How is it *adapted*, *secured*, and *contextualized* for *my* environment?”

That’s the difference between a novelty and a mission-critical tool. And if you're ready to explore how this shift impacts real-world automation strategy, check out our deep-dive guide on conversational AI integration best practices—designed for operators, not just engineers.